Apica Wayfinder

The Test Data Engine for Agentic Journeys

Right Data. Right Size. Right Now. Self-service test data provisioning that's compliant by design and agentic by nature.

Agentic AI requires clean, compliant, right-sized test data at the speed of development, but most teams wait days or weeks for centralized data teams to provision it. Wayfinder solves that.

Self-service, AI-assisted test data orchestration that turns test data from a bottleneck into a product. Teams provision right-sized, compliant (often synthetic) data on demand, with automation that calculates optimal coverage and generates what tests need, so you can iterate on agent workflows within a sprint.

Test Data Management Creates Release Bottlenecks and Risk

Extended Wait Times

Oversized Data Footprints

Privacy & Compliance Risks

Incomplete Test Coverage

Limited Data for New Builds

Regulatory Constraints

Self-Service Test Data Orchestration with AI Intelligence

Apica Wayfinder transforms test data management from a centralized bottleneck into a self-service capability. Unlike traditional test data management tools that require specialized skills and coding, Wayfinder’s criteria-driven approach enables development and QA teams to provision right-sized, compliant test data on demand — no coding required, no waiting for central teams.

Why Wayfinder is architecturally different:

Self-Service Automation

Intelligent Filtering

AI-Powered Synthetic Data

Automatic Masking

API-Driven Orchestration

Context Retention

Think of Wayfinder as the ATM of Test Data: Input your criteria, press Go and get the data you need on demand. We don’t just make test data faster — we make it self-service, right-sized, right-coverage, and compliant from day one.

What Wayfinder Does

Self-Service Data Provisioning, On Demand and at Sprint Speed

AI-Powered Synthetic Data That Stays in Your Control

Compliance By Design, Not As an Afterthought

Agentic AI Readiness Built Into the Data Layer

DevOps-Native Orchestration That Fits Your Existing Stack

Criteria-Driven Test Data Pipeline

Intelligent Data Profiling and Subsetting

- Production data analysis: Profiles production and other data sources to identify valid patterns, relationships, and coverage requirements, with zero data privacy risk.

- Exact subsetting: Generates executable data requests that extract only the records needed, for example reducing 10M records to 21K without losing test coverage.

- Referential integrity: Maintains relationships across tables, databases, and systems automatically.

- Privacy by design: Filters and masks data before loading into non-production environments, reducing breach surface area by 90%+. Sensitive data is replaced with business-context-valid values that your applications process correctly.
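To make the ideas above concrete, here is a minimal Python sketch of coverage-preserving subsetting, referential integrity, and masking on toy in-memory tables. It is an illustration under stated assumptions, not Wayfinder's implementation: the tables, the column names, and the helpers `coverage_subset`, `mask_email`, and `build_subset` are all hypothetical, and "coverage" is simplified to "one record per distinct combination of the chosen criteria columns".

```python
import hashlib

# Toy "production" tables (hypothetical data, for illustration only).
customers = [
    {"id": 1, "segment": "retail", "region": "EU", "email": "ana@example.com"},
    {"id": 2, "segment": "retail", "region": "EU", "email": "bo@example.com"},
    {"id": 3, "segment": "retail", "region": "US", "email": "cy@example.com"},
    {"id": 4, "segment": "business", "region": "US", "email": "di@example.com"},
    {"id": 5, "segment": "business", "region": "US", "email": "ed@example.com"},
]
orders = [
    {"order_id": 10, "customer_id": 1, "amount": 40},
    {"order_id": 11, "customer_id": 2, "amount": 15},
    {"order_id": 12, "customer_id": 3, "amount": 99},
    {"order_id": 13, "customer_id": 4, "amount": 250},
    {"order_id": 14, "customer_id": 5, "amount": 75},
    {"order_id": 15, "customer_id": 1, "amount": 5},
]


def coverage_subset(rows, keys):
    """Keep the first row seen for each distinct combination of `keys`."""
    seen = {}
    for row in rows:
        combo = tuple(row[k] for k in keys)
        seen.setdefault(combo, row)
    return list(seen.values())


def mask_email(email, salt="demo-salt"):
    """Deterministically replace an email with a format-valid pseudonym."""
    digest = hashlib.sha256((salt + email).encode("utf-8")).hexdigest()[:10]
    return f"user_{digest}@example.test"


def build_subset(customers, orders, keys):
    """Subset parents by coverage, keep matching children, mask sensitive fields."""
    kept = coverage_subset(customers, keys)
    kept_ids = {c["id"] for c in kept}
    # Referential integrity: keep only child rows whose parent survived.
    kept_orders = [o for o in orders if o["customer_id"] in kept_ids]
    # Masking happens before anything leaves the "production" side;
    # new dicts are built so the source rows are not modified.
    masked = [{**c, "email": mask_email(c["email"])} for c in kept]
    return masked, kept_orders


subset_customers, subset_orders = build_subset(
    customers, orders, ["segment", "region"]
)
```

In this sketch the masking is deterministic, so the same source value always maps to the same pseudonym, which is one simple way to keep masked values consistent across tables. A real deployment would need a managed secret rather than a hard-coded salt.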

AI-Powered Synthetic Data Generation

- Explainable AI (XAI): Transparent, user-controlled synthetic data generation; no black boxes, users see and control every framework.

- Automatic alignment: Synthetic data aligns seamlessly with masked production data; users control which aspects are synthetic vs. actual.

- Greenfield support: Ingests schemas and intel from design docs to generate valid synthetic data for new builds with no production data.

- Complex workflows: Handles intricate scenarios like payments processing engines, financial transactions, insurance policies and claims, and multi-service workflows.
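As a rough illustration of rule-driven synthetic data for a greenfield schema, the Python sketch below generates rows from a small declarative rule set. It is a simplified stand-in, not Wayfinder's generator: the `SCHEMA` layout, the rule types, and the `generate_rows` helper are assumptions made for this example. The point it tries to show is that every value comes from a visible, user-editable rule, and that a fixed seed makes the output reproducible.

```python
import random
from datetime import date, timedelta

# Hypothetical schema for a new build with no production data.
# Each column is described by an explicit, inspectable rule.
SCHEMA = {
    "policy_id": {"type": "sequence", "start": 1000},
    "product": {"type": "choice", "values": ["auto", "home", "life"]},
    "premium": {"type": "decimal", "min": 50.0, "max": 500.0},
    "start_date": {"type": "date", "from": date(2024, 1, 1), "days": 365},
}


def generate_rows(schema, count, seed=0):
    """Generate `count` rows by applying each column's rule in order."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    rows = []
    for i in range(count):
        row = {}
        for name, rule in schema.items():
            kind = rule["type"]
            if kind == "sequence":
                row[name] = rule["start"] + i
            elif kind == "choice":
                row[name] = rng.choice(rule["values"])
            elif kind == "decimal":
                row[name] = round(rng.uniform(rule["min"], rule["max"]), 2)
            elif kind == "date":
                offset = rng.randrange(rule["days"])
                row[name] = rule["from"] + timedelta(days=offset)
            else:
                raise ValueError(f"unknown rule type: {kind}")
        rows.append(row)
    return rows


policies = generate_rows(SCHEMA, 5, seed=42)
```

Changing a rule (for example, narrowing the `premium` range) changes only that column, which is the kind of user control the bullets above describe.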

Seamless Integration and Orchestration

- CI/CD integration: API-driven orchestration enables automated data refresh on every build or deployment.

- TDM tool compatibility: Works with existing test data management tools like IBM Optim, enhancing their value rather than replacing them.

- Agentic AI readiness: Agent-ready and prompt-compatible for watsonx Orchestrate and other agentic architectures.

- Multi-environment support: Provision data across development, QA, UAT, staging, and integration environments from one interface.
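A CI/CD step that refreshes test data on each build could look roughly like the Python sketch below. This page does not document Wayfinder's API, so everything specific here is hypothetical: the base URL, the `/provision` path, the payload fields, and the `build_provision_request` helper are placeholders that only show the general shape of an API-driven request. The sketch builds the request without sending it.

```python
import json
import urllib.request

# Hypothetical placeholder; substitute the real endpoint from your deployment.
BASE_URL = "https://wayfinder.example.invalid/api"


def build_provision_request(environment, criteria, build_id, token):
    """Build (but do not send) a POST request asking for a data refresh."""
    payload = {
        "environment": environment,  # e.g. "qa", "uat", "staging"
        "criteria": criteria,        # hypothetical criteria document
        "build_id": build_id,        # ties the data set to a pipeline run
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/provision",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


req = build_provision_request(
    environment="qa",
    criteria={"segment": "retail", "max_rows": 21000},
    build_id="build-123",
    token="TOKEN",
)
```

In a real pipeline, a Jenkins, GitHub Actions, or Azure DevOps job would send such a request with `urllib.request.urlopen(req)` (or an HTTP client of your choice) and read the token from the CI secret store.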

Two Pipelines, One Unified Approach

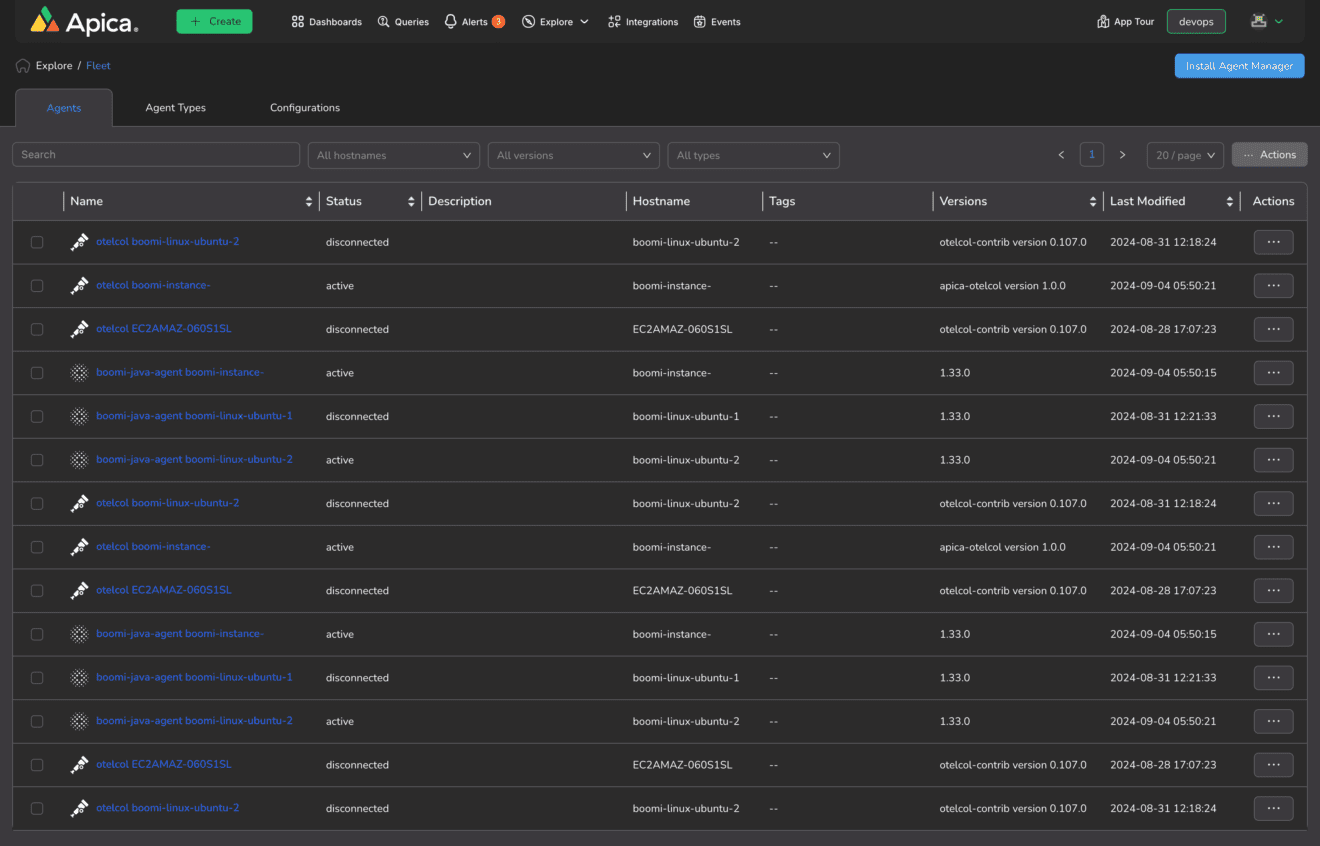

- Telemetry Pipeline (Apica Flow): Filters observability data (logs, metrics, traces) for production monitoring and alerting.

- Test Data Pipeline (Apica Wayfinder): Filters, orchestrates and enhances business data (customer records, transactions) for pre-production testing.

Why Wayfinder

Enterprise agility with reduced risk and cost — proven outcomes from organizations using self-service test data orchestration.

90%+ Reduction in Provisioning Wait Time

60–80% Reduction in Non-Production Storage Costs

90%+ Reduction in Data Breach Surface Area

Full Test Coverage, Fewer Defects

40–60% Faster Release Cadence

Explainable AI, Always in Control

No Coding Required

Complements IBM Optim & Existing TDM Tools

Enterprise Agility with Reduced Risk and Cost

Proven Test Data Orchestration benefits — organizations using Wayfinder achieve:

90%+ Reduction in test data provisioning wait time


Our teams waited 2–3 weeks for test data, with oversized production copies creating compliance headaches and cloud cost overruns.

After Wayfinder

IBM Partnership Benefits

- Complements IBM Optim: increases the value and usability of existing Optim investments through modern self-service capabilities

- Agentic AI on-ramp: provides IBM watsonx Orchestrate customers an easy path to agentic AI adoption with agent-ready, API-enabled architecture

- Exact subsetting: works with Optim or DataStage to create precise data subsets, reducing storage and privacy risks while maintaining test coverage

Frequently Asked Questions

Does Wayfinder require coding skills?

How does Wayfinder work with existing tools like IBM Optim?

Can Wayfinder generate synthetic data for greenfield projects with no production data?

How does Wayfinder's explainable AI (XAI) differ from other synthetic data tools?

Can Wayfinder integrate with CI/CD pipelines?

What's the typical ROI for Wayfinder?

Works With Your Existing Stack

IBM Optim

Jenkins

GitHub Actions

Azure DevOps

IBM watsonx

Jira

Oracle

SQL Server

Don’t see your tool? Wayfinder works with any database, CI/CD tool, or test management platform.