- Solutions

INTEGRATIONS

Integrate with any data source, notify any service, authenticate your way, and automate everything.

- Products

Apica Product Overview

Apica helps you simplify telemetry data management and control observability costs.

Fleet

Fleet Management transforms the traditional, static method of telemetry into a dynamic, flexible system tailored to your unique operational needs. It offers a nuanced approach to observability data collection, emphasizing efficiency and adaptability.

Flow

100% pipeline control to maximize data value while reducing observability spend by 40%. Collect, optimize, store, transform, route, and replay your observability data however, whenever, and wherever you need it.

Lake

Apica’s data lake (powered by InstaStore™) is a patented single-tier storage platform that seamlessly integrates with any object storage. It fully indexes incoming data, providing uniform, on-demand, and real-time access to all information.

Observe

The most comprehensive and user-friendly platform in the industry. Gain real-time insights into every layer of your infrastructure with automatic anomaly detection and root cause analysis.

Synthetic Monitoring

Unlock the power of synthetic monitoring with Apica. Tailored for enterprises, our robust monitoring tool delivers predictive insights into the performance and uptime of your critical assets – websites, applications, APIs, and IoT.

Test Data Orchestrator

Apica Test Data Orchestrator (TDO) transforms test data management with self-service automation and AI-driven intelligence, eliminating delays and enabling teams to provision right-sized, compliant test data on demand.

IronDB

Apica’s IronDB is a Time Series Database (TSDB) that offers unparalleled reliability, scalability, and speed, addressing the core needs of modern IT monitoring.

- Resources

Videos

Dive into valuable discussions and get to know our company through exclusive video content.

Events & Webinars

Join us for live and virtual events featuring expert insights, customer stories, and partner connections. Don’t miss out on valuable learning opportunities!

DOCUMENTATION

Find easy-to-follow documentation with detailed guides and support to help you use our products effectively.

- Company

About Us

Apica keeps enterprises operating. The Ascent platform delivers intelligent data management to quickly find and resolve complex digital performance issues before they negatively impact the bottom line.

Security

In a world in constant motion, where threat actors are everywhere, it is important to keep improving security in every part of your organization. We believe that is done by leveraging industry best practices and adopting the latest technology. We are proud to be both ISO 27001 and SOC 2 certified, so your data is safe and secure with us.

News

Stay updated with the latest news and press releases, featuring key developments and industry insights.

Leadership

Meet our leadership team, dedicated to driving innovation and success. Discover the visionaries behind our company’s growth and strategic direction.

Apica Partner Network

Join the Apica Partner Network and collaborate with industry leaders to deliver cutting-edge solutions. Together, we drive innovation, growth, and success for our clients.

Careers

Build your future with us! Explore exciting career opportunities in a dynamic environment that values innovation, teamwork, and professional growth.

- Login

Get Started Free

Get Enterprise-Grade Data Management Without the Enterprise Price Tag

Manage Your Data Smarter – Start for Free

Load Test Portal

Ensure seamless performance with robust load testing on Apica’s Test Portal powered by InstaStore™. Optimize reliability and scalability with real-time insights.

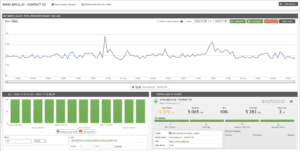

Monitoring Portal

Access the Monitoring Portal (powered by InstaStore™) to view live system performance data, monitor key metrics, and quickly identify any issues to maintain optimal reliability and uptime.