Store Billions of Unique Metric Streams Without Performance Penalties or Cost Explosions

The High-Cardinality Crisis in Cloud-Native Environments — And AI Is About to Make It Exponentially Worse

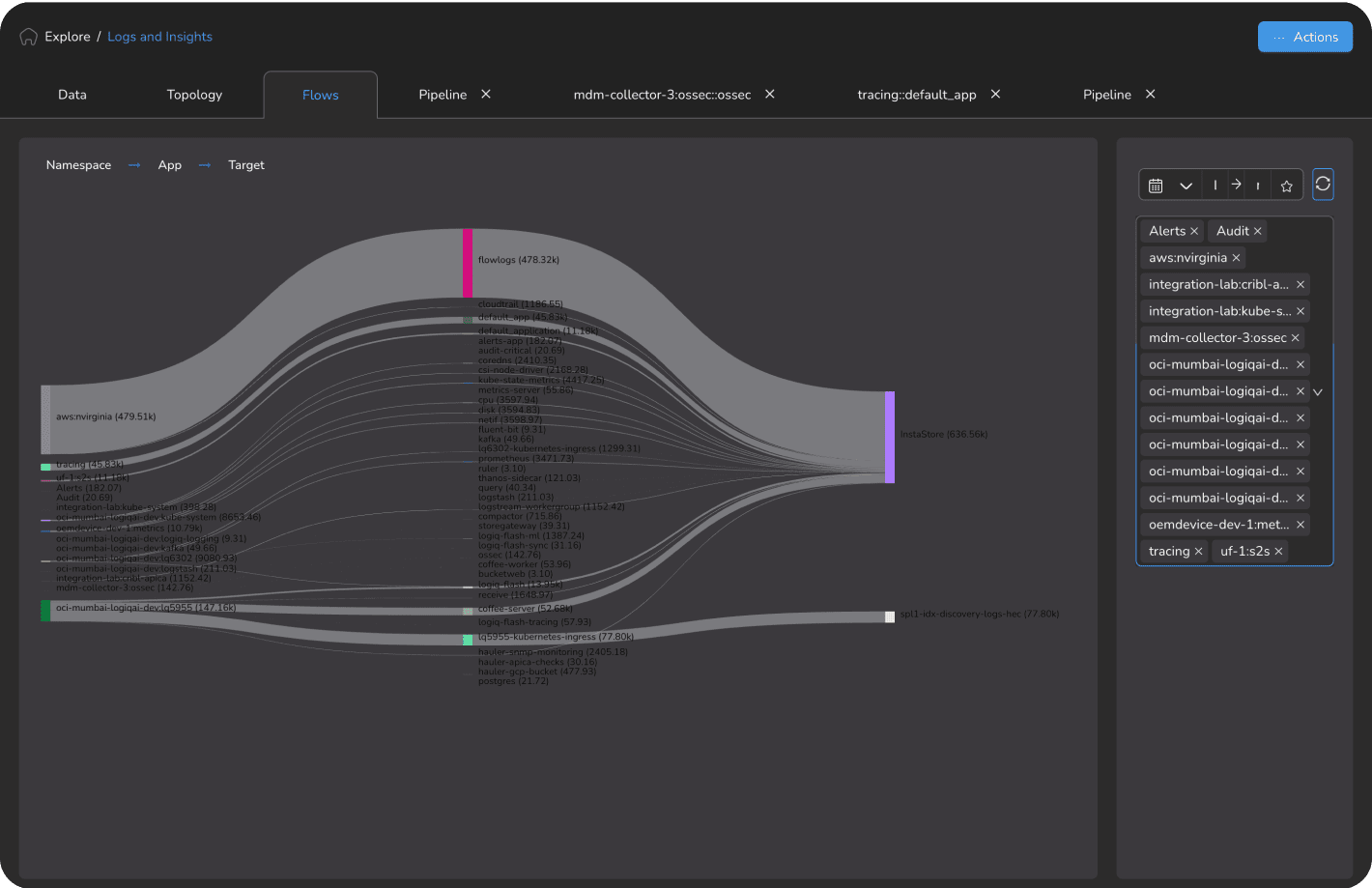

Cloud-native and microservices architectures have fundamentally changed the metrics landscape. Kubernetes deployments with hundreds of ephemeral pods, containerized applications with dynamic service meshes, and auto-scaling infrastructure generate explosive cardinality growth — millions of unique time series metrics with dozens of tags and dimensions per metric. Traditional time series databases weren’t designed for this reality.
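To make the growth concrete, here is a minimal sketch of the arithmetic behind cardinality. The label names and value counts are illustrative assumptions, not measurements from any real cluster. The worst-case number of unique series for one metric is the product of the distinct values of each label.

```python
from math import prod

# Hypothetical label cardinalities for ONE metric in a Kubernetes cluster.
# All numbers are illustrative assumptions.
label_values = {
    "namespace": 20,
    "deployment": 50,
    "pod": 400,         # ephemeral pods seen over the retention window
    "container": 3,
    "status_code": 5,
}

def worst_case_series(labels: dict[str, int]) -> int:
    """Upper bound on unique series: product of per-label value counts."""
    return prod(labels.values())

series_per_metric = worst_case_series(label_values)

# Adding a single extra label with 10 values multiplies the bound by 10.
with_extra_label = worst_case_series({**label_values, "region": 10})

print(series_per_metric, with_extra_label)
```

This is an upper bound: real label combinations are correlated (a pod belongs to one deployment), so observed cardinality is usually lower. The multiplicative shape is what matters, because every new label or pod churn event scales the total rather than adding to it.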

AI agents compound the cardinality problem in ways that general-purpose time series databases (TSDBs) are fundamentally unable to address. Every AI agent in production generates per-token costs, per-request latency histograms, tool invocation rates, and multi-agent call chain metrics, continuously, at machine speed, at cardinality levels that break platforms built for human-scale infrastructure monitoring. The organizations deploying AI agents today need a metrics foundation engineered for this reality. Apica Forge is purpose-built for exactly this.

- Cardinality management challenges: Competing platforms often tie cardinality limits to pricing tiers, forcing trade-offs between monitoring granularity and cost.

- Query performance degradation: Traditional TSDBs slow to a crawl when querying across high-cardinality dimensions, taking minutes to return results that should take milliseconds.

- Rapidly escalating costs: Per-metric or per-custom-metric pricing models penalize cloud-native architectures, with costs growing unsustainably as Kubernetes environments scale, a pattern Apica customers consistently report.

- Premature aggregation: Teams are forced to aggregate data at collection time, destroying granularity and creating blind spots during incident investigation.

- Tag anxiety: Engineers self-censor meaningful labels to stay within cardinality budgets, reducing observability value.

- AI metrics blindness: General-purpose TSDBs can't handle the cardinality and frequency of AI agent metric signals (per-token costs, latency distributions, tool call rates) without degradation or cost explosion.

Apica Forge: Built for Infinite Cardinality — Including Your AI Agents

Apica Forge is purpose-built for the cardinality realities of modern cloud-native infrastructure and the AI workloads running on top of it. No artificial limits. No per-metric pricing. No aggregation trade-offs. Store billions of unique metric streams, including the AI-specific signals that break general-purpose TSDBs, with consistently fast query performance regardless of cardinality.

Traditional platforms

- Cardinality limits: Artificial caps force trade-offs between monitoring completeness and cost

- Query performance degradation: Traditional TSDBs slow dramatically as unique metric combinations grow

- Exponential cost scaling: Per-metric pricing penalizes cloud-native architectures that generate high cardinality

- Forced aggregation: Destroying granularity at collection time creates permanent blind spots

- Tag anxiety: Engineers self-censor meaningful labels to avoid cardinality cost spikes

- AI metrics gap: No TSDB built to handle per-token costs, agent latency histograms, and tool invocation rates at the cardinality and frequency AI agents generate in production

Apica Forge

- No cardinality limits: Store billions of unique metric streams without performance penalties or cost explosions

- Consistent query performance: Millisecond response times regardless of unique metric stream count

- Architecture-aware pricing: No per-metric charges that penalize Kubernetes and microservices scale. Pricing is structured to scale with actual usage; contact the Apica team for details on what's right for your environment

- Full granularity preserved: Collect at full resolution and aggregate on query, never at collection time

- 4,000-character tag support: Use meaningful, descriptive labels without artificial constraints

- AI-native metrics foundation: Store per-token costs, latency histograms, tool invocation rates, and multi-agent call chain metrics at full resolution, providing the cardinality-scale metrics backbone that agentic infrastructure demands

The Apica advantage: We eliminate the cardinality tax, storing every metric stream at full resolution without compromising query performance or breaking your budget. And we're the metrics foundation your AI agents can actually depend on.

Infinite Cardinality, Consistent Performance

Apica Forge's architecture was designed from the ground up for the high-cardinality realities of cloud-native observability, delivering millisecond query performance regardless of how many unique metric streams you generate. And because Forge was explicitly built for AI-native metric signals, it extends the same unlimited cardinality and millisecond performance to the agentic workloads now entering production.

Apica Forge Time Series Engine

- Purpose-built TSDB handling billions of unique time series without performance degradation

- Metric names and tags supporting up to 4,000 characters — no artificial truncation

- No per-metric or per-custom-metric pricing constraints: Pricing scales with actual usage; contact the Apica team for the model that fits your environment

- Consistent millisecond query performance at any cardinality scale

Kubernetes-Scale Cardinality

- Handle millions of unique metric combinations from ephemeral pod environments

- Every pod, container, deployment, namespace, and node fully monitored without cardinality limits

- Service mesh metrics at full granularity across all interactions

- Auto-scaling doesn't create cardinality explosions or cost spikes

Full-Resolution Collection

- Collect metrics at full resolution — never pre-aggregate or downsample at collection time

- Apply aggregation only when querying — preserve all granularity for incident investigation

- Dynamic rollup policies applied retrospectively without data loss

- Compare current behavior against any historical point at full resolution
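The difference between aggregating at collection time and aggregating on query can be sketched in a few lines. This is an illustration of the general idea only, not Forge's query API: the sample data, the `aggregate_on_query` helper, and its parameters are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical raw samples: (timestamp_seconds, pod, latency_ms).
raw = [
    (0, "pod-a", 12.0), (0, "pod-b", 15.0),
    (10, "pod-a", 11.0), (10, "pod-b", 480.0),   # pod-b spikes here
    (20, "pod-a", 13.0), (20, "pod-b", 14.0),
]

def aggregate_on_query(samples, group_by, window_s):
    """Average raw samples per time window, grouped by pod or fleet-wide."""
    buckets = defaultdict(list)
    for ts, pod, value in samples:
        window_start = ts // window_s * window_s
        group = pod if group_by == "pod" else "all"
        buckets[(window_start, group)].append(value)
    return {key: mean(values) for key, values in sorted(buckets.items())}

# The same raw data answers both questions, because nothing was discarded.
fleet_view = aggregate_on_query(raw, group_by="all", window_s=60)
per_pod_view = aggregate_on_query(raw, group_by="pod", window_s=10)

print(fleet_view)
print(per_pod_view[(10, "pod-b")])
```

If only the one-minute fleet average had been stored at collection time, the 480 ms spike on `pod-b` would be blended into a single number and could not be recovered during an investigation. Keeping raw samples lets the rollup be chosen after the fact.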

Query Performance at Scale

- Millisecond query response across billions of unique metric streams

- Efficient storage architecture that doesn't degrade as cardinality grows

- Multi-dimensional queries across any tag combination without performance penalties

- Historical data stays queryable at consistently fast speeds, with no archival delay in standard deployments

AI-Native Metrics and SLO Signals (Powered by Apica Forge)

Apica Forge was built to be the metrics backbone for agentic infrastructure, storing and querying the signals that AI agents generate at machine speed:

- Per-token costs: Track LLM API costs at per-request granularity across all models and features. No aggregation that hides cost spikes

- Per-agent latency histograms: Store full latency distributions for every AI agent interaction and calculate accurate P95/P99 without raw sample retention

- Tool invocation rates: Monitor every tool call, retrieval operation, and API interaction at full cardinality: per agent, per model, per feature

- Multi-agent call chain metrics: Track the metric signals that emerge from complex agentic workflows, at cardinality that breaks general-purpose TSDBs

- SLO signals at agentic scale: Define and track Service Level Objectives against agentic reliability contracts, powered by Forge's high-cardinality metrics engine

- Continuous operations under failure: Multi-node replication keeps Forge ingesting and serving queries even during node failures. No gaps in agent telemetry, no alerting blind spots
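As an illustration of how percentiles can be derived from a stored histogram rather than from raw samples, the sketch below estimates P95 and P99 by linear interpolation inside the bucket that contains the target rank. The source does not describe how Forge computes percentiles internally, so this is a generic technique; the bucket bounds, counts, and the `estimate_quantile` helper are assumptions made for the example.

```python
from bisect import bisect_left

# Hypothetical cumulative histogram for one agent's request latency.
# upper_bounds are bucket upper edges in milliseconds; counts are cumulative.
upper_bounds = [50, 100, 250, 500, 1000, 2500]
cumulative_counts = [620, 870, 950, 985, 998, 1000]

def estimate_quantile(q, bounds, cumulative):
    """Estimate quantile q (0..1) by interpolating within the target bucket."""
    total = cumulative[-1]
    rank = q * total
    # First bucket whose cumulative count reaches the target rank.
    i = min(bisect_left(cumulative, rank), len(bounds) - 1)
    lower_bound = bounds[i - 1] if i > 0 else 0.0
    lower_count = cumulative[i - 1] if i > 0 else 0
    in_bucket = cumulative[i] - lower_count
    if in_bucket == 0:
        return float(bounds[i])
    fraction = (rank - lower_count) / in_bucket
    return lower_bound + fraction * (bounds[i] - lower_bound)

p95 = estimate_quantile(0.95, upper_bounds, cumulative_counts)
p99 = estimate_quantile(0.99, upper_bounds, cumulative_counts)
print(round(p95, 1), round(p99, 1))
```

With these made-up counts the estimates come out at roughly 250 ms and 692 ms. Accuracy depends on bucket layout: narrower buckets around the latencies that matter give tighter estimates, which is one reason a histogram per agent, model, and feature multiplies cardinality so quickly.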

No Cardinality Limits. No Performance Penalties. No Surprises.

Results based on Apica customer deployments. Individual results may vary based on environment complexity and implementation scope.

Cloud-Native SaaS: Platform Engineering

Challenge: A Kubernetes deployment generating 500M+ unique metric streams. Prometheus hit cardinality limits and failed under load. Datadog costs grew rapidly, driven by per-metric cardinality pricing.

Solution: Apica Forge deployed as the high-cardinality metrics backend, replacing Prometheus for Kubernetes-scale time series storage.

Results:

- 100% cardinality coverage — every metric stream captured with no limits or sampling

- Query response time improved from minutes to under 50ms at full scale

- 65% reduction in metrics storage costs vs. per-metric pricing platform

- Engineering team removed tag self-censorship — full context for every incident

Financial Services: Observability Platform Team

Challenge: A multi-region trading platform with 2B+ unique metric streams across microservices. The legacy TSDB degraded to 5-minute query times at scale.

Solution: Apica Forge deployed in place of the legacy TSDB for high-cardinality financial metrics, with consistent query performance.

Results:

- 2B+ metric streams stored with consistent sub-100ms query performance

- Zero metric loss during peak trading cardinality events

- 78% reduction in TSDB operational overhead

- Root cause analysis time reduced from hours to minutes with full-granularity access

Built for Cardinality at Enterprise Scale — Including the AI Workloads Generating It

Apica Forge wasn't retrofitted for high cardinality — it was architected from scratch to handle the metric reality of modern cloud-native infrastructure. That same unlimited cardinality and millisecond performance extends naturally to the AI agents now entering production environments, handling the per-token costs, latency histograms, and tool invocation rates that general-purpose TSDBs can't sustain at scale.

Architecture-Native Cardinality

Not a traditional TSDB with cardinality bolted on — Apica Forge's storage architecture is designed for billions of unique time series. No performance degradation, no artificial limits, no scaling surprises.

Pricing That Scales with Value

No per-metric or per-custom-metric charges that penalize cloud-native architectures. Predictable costs that don't explode as Kubernetes generates more unique metric combinations. Pricing is structured to scale with actual usage — contact the Apica team to discuss the right model for your environment.

Full Granularity Always

Never pre-aggregate or downsample at collection time. Collect at full resolution, apply aggregation on query. Full context for incident investigation at any historical point.

4,000-Character Tag Support

Use descriptive, meaningful tag names without artificial constraints. Stop self-censoring important context to stay within cardinality budgets — label everything that matters.

The Metrics Backbone for Agentic AI

Apica Forge was built to make metrics the foundation for agentic intelligence. Per-token costs, per-agent latency histograms, tool invocation rates, and multi-agent call chain metrics are stored at full resolution and queryable in milliseconds, at the cardinality levels that production AI agents generate. The new SLO dashboard in Ascent 2.16 uses Forge's high-cardinality metrics engine to track reliability contracts for AI-integrated services, not just traditional uptime metrics. Forge is the metrics layer that scales from Kubernetes to agentic AI without architectural compromise.
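For readers new to SLO tracking, the arithmetic behind a request-based reliability contract can be sketched as follows. This is a generic error-budget calculation, not the Ascent dashboard's implementation; the function name, the 99.5% target, and the request counts are hypothetical.

```python
def error_budget_report(slo_target, total_requests, failed_requests):
    """Summarize error-budget consumption for a request-based SLO."""
    # Failures the SLO tolerates over this window.
    allowed_failures = (1.0 - slo_target) * total_requests
    if allowed_failures > 0:
        consumed = failed_requests / allowed_failures
    else:
        consumed = float("inf") if failed_requests else 0.0
    return {
        "availability": 1.0 - failed_requests / total_requests,
        "allowed_failures": allowed_failures,
        "budget_consumed": consumed,
        "budget_remaining": 1.0 - consumed,
    }

# Hypothetical window: an AI-integrated service with a 99.5% success target.
report = error_budget_report(
    slo_target=0.995, total_requests=200_000, failed_requests=400
)
for name, value in report.items():
    print(f"{name}: {value:.4f}")
```

In this made-up window the service tolerates about 1,000 failed requests and has used roughly 40% of that budget. Computing the same report per agent, per model, or per feature is what turns SLO tracking into a high-cardinality workload.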

Go Deeper

Related blog posts, product pages, and documentation for platform teams dealing with high-cardinality metrics.

Kubernetes Monitoring: Best Practices, Metrics and Tools

Read article →

White Paper: High Cardinality: Rethinking Observability for Cloud-native Systems

Read the docs →

Apica Forge

Real-time high-cardinality metrics with SLO insights

Explore Apica Forge →

High-Cardinality Metrics at Scale

Explore Use Case →