Ensure Performance, Reliability, and Compliance for AI Applications

AI Applications Demand New Observability Approaches

As organizations rapidly adopt generative AI and large language models, they’re discovering that traditional observability tools weren’t built for AI workloads. LLM applications introduce unique challenges: non-deterministic outputs, token-based costs that can spiral unpredictably, complex multi-step agentic workflows, and latency requirements that directly impact user experience.

And as those LLM applications mature into fully autonomous agentic systems, the challenge compounds further. AI agents don’t just generate outputs — they generate continuous streams of telemetry, tool call records, reasoning traces, and decision logs that existing observability pipelines weren’t architected to handle. The organizations that build the right telemetry infrastructure now will be the ones that can operate agentic AI reliably and affordably at scale.

Unpredictable costs

Token usage and API calls create variable costs that are difficult to forecast and control.

Performance blind spots

Traditional APM doesn't capture prompt quality, model accuracy, or inference latency specific to LLMs.

Compliance risks

AI responses are difficult to monitor for bias, inappropriate outputs, or data privacy violations.

Debugging complexity

Non-deterministic outputs make it challenging to reproduce issues and understand failure modes.

Agentic workflow visibility

Multi-step AI agent chains require end-to-end tracing across model calls, tool usage, and retrieval systems.

Telemetry pipeline gaps

AI agents generate 10–100x more telemetry than traditional applications, and most organizations have no cost-efficient way to route, govern, and store it without breaking their observability budget.

Comprehensive LLM and AI Application Monitoring — With Pipeline-First Telemetry Governance

Apica delivers purpose-built observability for AI and LLM workloads, providing complete visibility into model performance, costs, quality, and compliance. It goes further with a pipeline-first layer that governs the telemetry AI agents generate: filtering, enriching, and routing it before it reaches expensive indexing platforms, and storing it cost-efficiently.

Without AI-specific observability:

- Unpredictable costs: No visibility into token usage until bills arrive; no per-feature or per-model attribution

- Performance blind spots: Traditional APM can't capture prompt quality, inference latency, or model accuracy

- Compliance gaps: No monitoring for bias, inappropriate outputs, or PII in AI responses

- Debugging complexity: Non-deterministic outputs make issue reproduction nearly impossible

- Agentic workflow opacity: Multi-step AI chains have no end-to-end tracing or visibility

- Telemetry cost explosion: AI agent workloads generate 10–100x more telemetry with no cost-efficient pipeline to handle it — every trace, reasoning log, and tool call hits expensive ingestion at full price

With Apica:

- LLM-specific metrics: Track token usage, cost per request, inference latency, and model accuracy in real time

- Agentic workflow tracing: End-to-end visibility across multi-step AI agent chains and tool usage

- Prompt and response monitoring: Capture and analyze prompt quality, response accuracy, and output appropriateness

- Cost attribution: Understand AI spending by model, feature, customer, or user cohort

- Compliance monitoring: Continuous validation of AI outputs against policies and regulatory requirements

- Pipeline-first AI telemetry governance: Filter, enrich, and route AI agent telemetry before costly platform ingestion — controlling the cost of observing AI, not just the cost of running it

The Apica advantage: We give AI engineering teams the insights they need to optimize performance, control costs, and maintain compliance, without monthly billing surprises and without building a second telemetry infrastructure for AI workloads.

Complete Visibility Into AI Workloads

Apica captures the metrics, traces, and behavioral signals that matter for AI applications — from individual LLM calls through multi-step agentic workflows — while governing the telemetry pipeline that carries all of it.

LLM Performance Monitoring

- Track inference latency, throughput, and error rates per model and deployment

- Monitor token usage patterns and identify optimization opportunities

- Detect performance regressions when model versions change

- Baseline normal behavior and alert on deviations
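As a rough illustration of "baseline normal behavior and alert on deviations", the sketch below flags an inference latency that sits more than k standard deviations above a recent baseline for one model. It is a minimal sketch assuming a simple z-score rule; the function name `is_latency_anomaly`, the threshold `k=3.0`, and the sample latencies are hypothetical and do not describe how Apica computes baselines.

```python
from statistics import mean, stdev


def is_latency_anomaly(history_ms: list[float], latest_ms: float, k: float = 3.0) -> bool:
    """Flag latest_ms if it is more than k standard deviations above the baseline mean.

    history_ms: recent inference latencies for one model/deployment (hypothetical input).
    """
    if len(history_ms) < 2:
        return False  # not enough data to form a baseline yet
    baseline = mean(history_ms)
    spread = stdev(history_ms)
    if spread == 0:
        return latest_ms > baseline  # flat history: any increase is a deviation
    return (latest_ms - baseline) / spread > k


recent = [210.0, 195.0, 205.0, 220.0, 200.0, 215.0]
print(is_latency_anomaly(recent, 212.0))  # False
print(is_latency_anomaly(recent, 400.0))  # True
```

A fixed z-score rule is easy to reason about, but it ignores daily or weekly traffic patterns; a real baseline would likely need to account for those.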

Token Cost Attribution

- Real-time cost tracking by model, feature, team, and customer cohort

- Budget alerting before spend spirals out of control

- Identify high-cost prompts and optimize for efficiency

- ROI reporting for individual AI features and use cases
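Per-request cost attribution is, at its core, simple arithmetic: input tokens and output tokens, each multiplied by the model's per-token price, then summed along whatever dimension you attribute to. The sketch below assumes hypothetical model names (`model-a`, `model-b`), made-up prices, and made-up per-feature budgets; it is not Apica's API, only an illustration of the calculation plus a basic budget check.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical USD prices per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {
    "model-a": {"input": 0.0005, "output": 0.0015},
    "model-b": {"input": 0.01, "output": 0.03},
}


@dataclass
class LLMCall:
    model: str
    feature: str  # attribution dimension; could equally be team or customer cohort
    input_tokens: int
    output_tokens: int


def request_cost(call: LLMCall) -> float:
    """Cost of one request: input tokens x input price + output tokens x output price."""
    price = PRICE_PER_1K[call.model]
    return (call.input_tokens * price["input"] + call.output_tokens * price["output"]) / 1000


def cost_by_feature(calls: list[LLMCall]) -> dict[str, float]:
    totals: dict[str, float] = defaultdict(float)
    for call in calls:
        totals[call.feature] += request_cost(call)
    return dict(totals)


def over_budget(totals: dict[str, float], budgets: dict[str, float]) -> list[str]:
    """Features whose spend exceeds their budget; features without a budget are never flagged."""
    return sorted(f for f, spent in totals.items() if spent > budgets.get(f, float("inf")))


calls = [
    LLMCall("model-b", "search", input_tokens=2000, output_tokens=500),
    LLMCall("model-a", "chat", input_tokens=1000, output_tokens=1000),
    LLMCall("model-b", "search", input_tokens=1000, output_tokens=1000),
]
totals = cost_by_feature(calls)
print({f: round(c, 4) for f, c in totals.items()})
print(over_budget(totals, {"search": 0.05, "chat": 0.01}))
```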

Agentic Workflow Tracing

- End-to-end tracing across multi-step AI agent chains

- Visibility into tool calls, retrieval operations, and model interactions

- Identify bottlenecks and failures in complex agentic pipelines

- Full context for debugging non-deterministic outputs
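One way to picture agentic tracing is as a tree of spans: each model call, tool call, or retrieval step records its parent, start time, end time, and status. The sketch below uses hypothetical span names and timings to find the slowest leaf step (a simple bottleneck heuristic) and to list failed steps. It illustrates the idea only; in practice spans would come from tracing instrumentation rather than hand-built objects.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]  # None for the root span of the agent run
    name: str                 # hypothetical names, e.g. "llm.plan", "tool.web_search"
    start_ms: float
    end_ms: float
    ok: bool = True

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms


def slowest_leaf(spans: list[Span]) -> Span:
    """Leaf spans (no children) are the concrete steps; the longest is a first bottleneck candidate."""
    parent_ids = {s.parent_id for s in spans}
    leaves = [s for s in spans if s.span_id not in parent_ids]
    return max(leaves, key=lambda s: s.duration_ms)


def failed_steps(spans: list[Span]) -> list[str]:
    return [s.name for s in spans if not s.ok]


trace = [
    Span("1", None, "agent.run", 0, 1200),
    Span("2", "1", "llm.plan", 0, 150),
    Span("3", "1", "tool.web_search", 150, 900),
    Span("4", "1", "retrieval.vector_query", 900, 1000, ok=False),
    Span("5", "1", "llm.answer", 1000, 1200),
]
print(slowest_leaf(trace).name)  # tool.web_search
print(failed_steps(trace))       # ['retrieval.vector_query']
```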

Compliance & Quality Monitoring

- Continuous monitoring of AI outputs for policy violations and inappropriate content

- PII detection in prompts and responses before they reach storage

- Prompt quality scoring and response accuracy tracking

- Audit trails for regulatory compliance across all AI interactions
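To make "PII detection before storage" concrete, here is a minimal redaction step that runs on a prompt before it is written anywhere. It assumes just two illustrative regex patterns (email addresses and the US Social Security number format); real PII detection needs far broader coverage than a couple of regexes. The names `PII_PATTERNS` and `redact` are hypothetical.

```python
import re

# Illustrative patterns only; production PII detection needs much broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace each PII match with a [LABEL] placeholder and report which kinds were found."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, found


prompt = "Customer jane.doe@example.com, SSN 123-45-6789, asks about her claim."
clean, kinds = redact(prompt)
print(clean)  # Customer [EMAIL], SSN [US_SSN], asks about her claim.
print(kinds)  # ['EMAIL', 'US_SSN']
```

Only the redacted text would be stored; the list of detected kinds can feed an audit record without retaining the PII itself.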

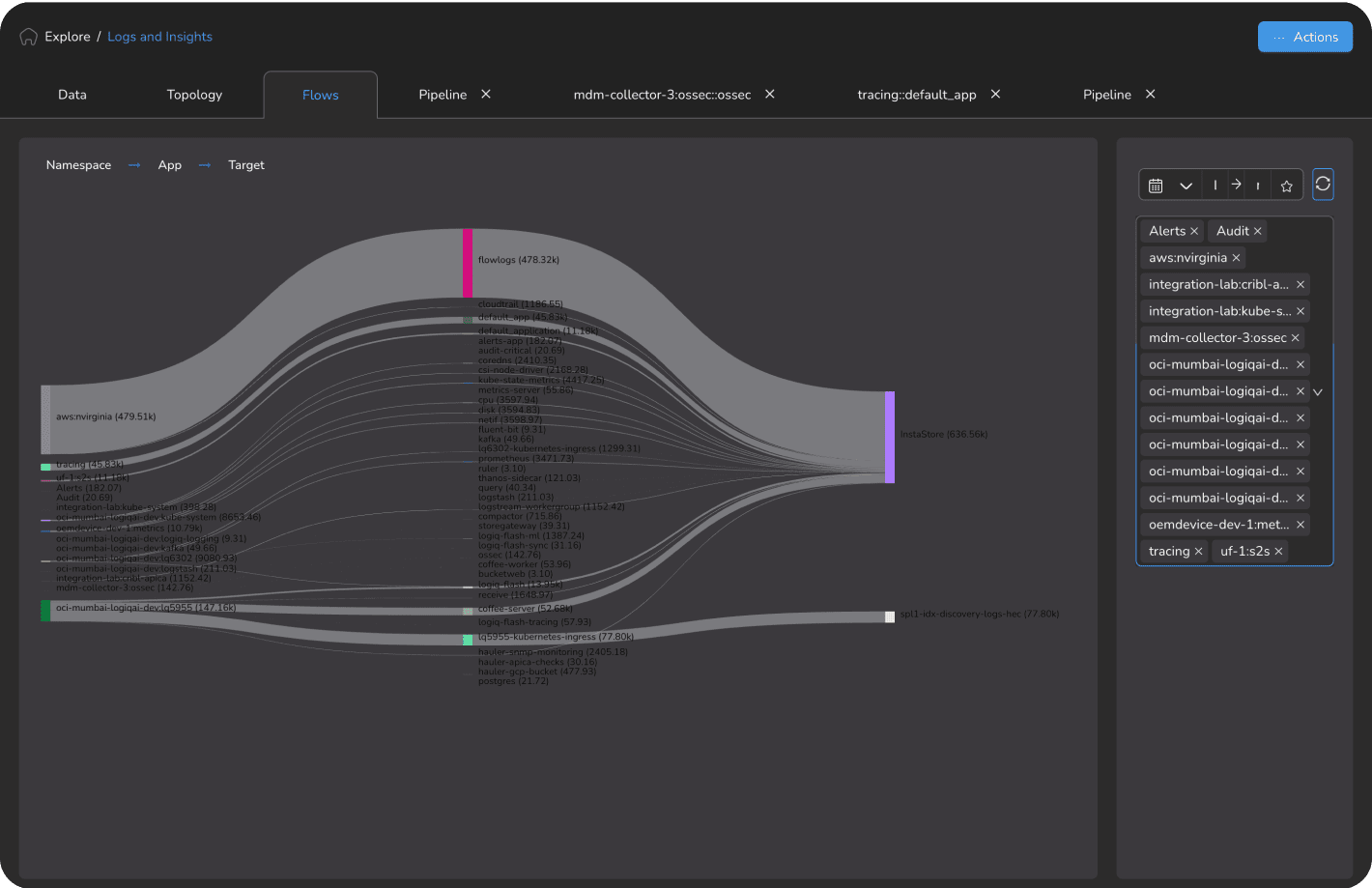

AI Telemetry Pipeline Governance (Powered by Apica Flow)

As AI agents scale from POC to production, the telemetry they generate becomes as much a cost problem as a visibility problem. Apica Flow brings pipeline-first control to AI workloads:

- Filter routine LLM output logs and tool call noise before they hit expensive indexing tiers

- Route AI agent traces, reasoning logs, and decision records to cost-optimized storage with full replay capability

- Apply dynamic sampling policies that preserve agent decision traces and anomaly signals while filtering high-volume routine outputs

- Store AI agent interaction histories and LLM telemetry at object storage economics via InstaStore™ — with instant queryability when you need to audit, retrain, or replay

- Enrich AI telemetry with business context (customer tier, session ID, feature flag) before routing to downstream analytics
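The filtering, sampling, enrichment, and routing bullets above can be sketched as a small per-record policy. This is a generic illustration, not Apica Flow configuration: the record fields (`type`, `trace_id`, `error`, `latency_ms`), the 10% sample rate, the 2,000 ms threshold, and the `index`/`archive` destinations are all assumptions. Decision and reasoning records are always indexed, errors and slow calls are kept as anomaly signals, and routine records are sampled by a hash of the trace ID so every record in one trace gets the same decision. Records that are not indexed go to low-cost storage instead of being dropped, which keeps replay possible.

```python
import hashlib

# Hypothetical policy parameters; tune per workload.
SAMPLE_RATE = 0.10  # index roughly 10% of routine traces
SLOW_MS = 2000      # latency above this counts as an anomaly
ALWAYS_INDEX = {"agent_decision", "reasoning_trace"}


def enrich(record: dict, context: dict) -> dict:
    """Attach business context (e.g. customer tier, session ID) without mutating the input."""
    return {**record, **context}


def in_sample(trace_id: str, rate: float) -> bool:
    """Deterministic per-trace sampling: the same trace_id always yields the same answer."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # map to [0, 1)
    return bucket < rate


def route(record: dict) -> str:
    """Return 'index' (searchable, expensive tier) or 'archive' (low-cost object storage)."""
    if record["type"] in ALWAYS_INDEX:
        return "index"
    if record.get("error") or record.get("latency_ms", 0) > SLOW_MS:
        return "index"
    return "index" if in_sample(record["trace_id"], SAMPLE_RATE) else "archive"


rec = enrich({"type": "tool_call", "trace_id": "t-42", "latency_ms": 120},
             {"customer_tier": "enterprise", "session_id": "s-7"})
print(route(rec), rec["customer_tier"])
```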

AI Validation via Synthetic Monitoring (Apica Vanguard)

Synthetic checks provide the known-result validation signals that agentic systems depend on to confirm they're operating correctly:

- Simulate real user and AI agent workflows globally to catch degradation before it affects real users

- Use synthetic probe results as ground-truth signals to detect AI hallucination and verify autonomous decision outputs

- Expose synthetic check data as a native stream in Apica Flow — apply full pipeline governance including filtering, enrichment, and cost routing

- Validate agentic journey completion rates end-to-end, not just individual model call success
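A known-result synthetic check can be as simple as sending inputs whose correct answers are known in advance and scoring the responses. The sketch below assumes a hypothetical `Probe` type, a stub agent in place of a real one, and a crude case-insensitive substring match as the correctness test; a production check would need a more robust comparison.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Probe:
    name: str
    prompt: str
    expected: str  # known-correct answer seeded for this synthetic input


def run_probes(agent: Callable[[str], str], probes: list[Probe]) -> dict:
    """Score an agent against known-result probes; any miss is a candidate hallucination."""
    failures = [p.name for p in probes if p.expected.lower() not in agent(p.prompt).lower()]
    return {"pass_rate": 1 - len(failures) / len(probes), "failures": failures}


# Stub standing in for a real agent endpoint (hypothetical responses).
canned = {
    "What is the status of test order 1001?": "Order 1001 has shipped.",
    "What is the refund window?": "Refunds are accepted within 14 days.",
}
probes = [
    Probe("order-status", "What is the status of test order 1001?", "shipped"),
    Probe("refund-window", "What is the refund window?", "30 days"),
]
report = run_probes(lambda prompt: canned[prompt], probes)
print(report)  # {'pass_rate': 0.5, 'failures': ['refund-window']}
```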

AI Applications You Can Trust and Afford

Results based on Apica customer deployments. Individual results may vary based on environment complexity and implementation scope.

Enterprise SaaS: AI Feature Team

Challenge: $45K/month in LLM API costs, with no visibility into which features or customers drove spend. Costs were growing 40% month over month.

Solution: Apica LLM observability for token cost attribution, prompt quality monitoring, and agentic workflow tracing.

Results:

- 62% reduction in LLM API costs through prompt optimization and model routing

- Per-feature cost attribution enabled ROI analysis for every AI capability

- Compliance monitoring identified prompt injection attempts in the first week

- Inference latency improved 38% after identifying inefficient retrieval patterns


Financial Services: AI Risk Platform

Challenge: A regulatory requirement to prove that AI outputs met compliance standards, with no tooling to monitor LLM responses for policy violations.

Solution: Apica compliance monitoring with full audit trails for all AI interactions and automated policy violation detection.

Results:

- 100% compliance audit coverage across all AI-generated outputs and decisions

- Policy violation detection reduced manual review burden by 78%

- Met regulatory audit requirements with complete AI decision trails

- PII in prompts detected and redacted before storage in 100% of cases

Built for the AI Observability Gap — And the Telemetry Pipeline Behind It

Traditional observability platforms weren't designed for generative AI. Apica provides the LLM-native telemetry and analysis capabilities that AI engineering teams need — plus the pipeline-first architecture that governs the telemetry AI agents generate at scale. That combination is what separates Apica from point-solution LLM monitoring tools that observe AI but can't control the cost of doing so.

LLM-Native Observability

Captures the metrics that matter for AI — token usage, prompt quality, inference latency, model accuracy — not just generic APM metrics that miss what's unique about LLM workloads.

Real-Time Cost Control

Per-request cost attribution across models, features, customers, and teams. Budget alerting before spend spirals. No more monthly billing surprises from runaway AI usage.

Agentic-Ready Tracing

End-to-end tracing across multi-step AI agent chains. Full visibility into tool calls, retrieval operations, and model interactions — the complexity that matters as AI workflows grow.

Compliance by Design

Continuous monitoring for policy violations, PII in prompts, bias in outputs, and inappropriate responses. Complete audit trails for regulatory compliance. AI you can prove is safe.

Pipeline-First AI Telemetry

The only AI observability platform with a pipeline-first architecture purpose-built for AI-era data volumes. Filter, route, and store AI agent telemetry cost-efficiently — handling 10–100x volume growth without a proportional spike in observability spend. InstaStore™ provides infinite, instantly queryable retention for prompt histories, agent decision logs, and compliance records. Observe your AI and govern it, in one platform.

Synthetic AI Validation

Vanguard's synthetic monitoring extends into the AI stack, simulating real user and AI agent workflows to validate that autonomous systems are performing within expected parameters. Known-result synthetic signals detect hallucination and verify agentic decision outputs — before they reach your users. New in Ascent 2.16: synthetic check data is now a native stream in Apica Flow, enabling full pipeline governance of your AI validation signals.

Go Deeper

Related blog posts, product pages, and documentation for AI engineering teams.

Apica Telemetry Pipeline at AWS re:Invent 2025: Powering AI, Security, and Cost Optimization in the Cloud

Read article →

OpenTelemetry: The Foundation of Modern Observability Strategy

Read article →

OpenTelemetry Best Practices

Read the docs →

Common Use Cases for OpenTelemetry

Read the docs →

Apica Vanguard

Synthetic monitoring that simulates real user and AI agent workflows globally.

Explore the product →

Apica Wayfinder

On-demand, compliant test data for agentic journeys.

Explore the product →