Kubernetes-Native Observability for Modern Infrastructure

Platform Complexity Outpaces Traditional Monitoring — And AI Is About to Compound It

Platform engineering teams managing modern Kubernetes environments face unprecedented observability challenges. Traditional monitoring tools were built for static infrastructure, not ephemeral containers that spin up and down in seconds. The dynamic nature of cloud-native platforms — combined with multi-cloud deployments and microservices architectures — creates visibility gaps that impact reliability and slow down incident response.

Now factor in agentic AI. As organizations deploy AI agents, those agents spawn new microservices dynamically, multiplying the cardinality problem that already strains legacy tools. The same Kubernetes-native, elastic, high-cardinality architecture that handles modern container orchestration is exactly what agentic AI infrastructure demands. Platform teams that get this right now won’t have to rebuild it when AI agents reach production scale.

- Dynamic infrastructure: Kubernetes pods are ephemeral, and traditional monitoring loses track of short-lived containers.

- High cardinality explosion: Container orchestration creates millions of unique metric combinations that overwhelm legacy tools.

- Multi-cloud complexity: Managing observability across AWS, Azure, GCP, and on-premises infrastructure fragments visibility.

- Platform sprawl: The average organization uses 10+ monitoring tools, creating operational overhead and blind spots.

- Resource optimization: Difficulty understanding actual resource utilization versus requests and limits leads to waste or performance issues.

- Agentic AI amplification: AI agents deployed on Kubernetes infrastructure spawn microservices dynamically, generating 10–100x more telemetry than traditional workloads and compounding every existing cardinality and cost challenge.
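To see why cardinality grows so quickly, note that the worst-case number of unique series for one metric is the product of the distinct values of each of its labels, and that every new pod name adds a fresh set of series. The sketch below is a back-of-the-envelope illustration only: the metric, label names, and counts are hypothetical, and the result is an upper bound, since not every label combination occurs in practice.

```python
from math import prod

# Hypothetical distinct-value counts for the labels on one HTTP request
# counter scraped from every pod in a cluster (illustrative numbers only).
LABEL_VALUES = {
    "pod": 2_000,        # pods alive at one time; the pod name implies its namespace
    "status_code": 6,
    "method": 4,
    "route": 25,
}


def worst_case_series(labels: dict[str, int]) -> int:
    """Upper bound on unique series: the product of each label's distinct values."""
    return prod(labels.values())


def series_after_churn(labels: dict[str, int], pod_generations: int) -> int:
    """Upper bound on series within the retention window after several full rollouts.

    Every restart or rollout gives pods new names, so the 'pod' label keeps
    gaining values, and series for replaced pods stay queryable until they
    age out of retention.
    """
    churned = dict(labels, pod=labels["pod"] * pod_generations)
    return worst_case_series(churned)


if __name__ == "__main__":
    print(f"{worst_case_series(LABEL_VALUES):,}")       # 1,200,000
    print(f"{series_after_churn(LABEL_VALUES, 10):,}")  # 12,000,000
```

Even with modest per-label counts, ten rollouts inside the retention window push a single metric from roughly one million to twelve million potential series.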

Elastic, Kubernetes-Native Observability — Built for Today's Infrastructure and Tomorrow's AI Workloads

Apica delivers observability built from the ground up for cloud-native platform operations. Our Kubernetes-native architecture automatically adapts to dynamic infrastructure changes, handles high cardinality data efficiently, and provides unified visibility across multi-cloud and hybrid environments, giving platform engineering teams complete control without complexity. And because Apica's architecture was designed for the cardinality demands of container orchestration, it's already built to handle the telemetry volumes that agentic AI workloads will generate on top of it.

- Dynamic infrastructure blind spots: Traditional monitoring loses track of ephemeral containers before metrics are captured

- High cardinality limits: Legacy TSDBs fail under the metric volume of modern Kubernetes deployments

- Multi-cloud fragmentation: Separate tools per cloud create incomplete, inconsistent visibility

- Platform sprawl: 10+ monitoring tools create operational overhead and coverage gaps

- Resource waste: Inability to accurately understand utilization vs. requests leads to over-provisioning

- Agentic AI blindness: No mechanism to auto-discover and monitor AI agent-spawned microservices as they spin up dynamically on Kubernetes infrastructure

- Kubernetes-native architecture: Purpose-built to monitor dynamic container orchestration at scale

- High cardinality support: Advanced data handling designed for millions of unique metric combinations

- Multi-cloud visibility: Unified observability across AWS, Azure, GCP, and on-premises infrastructure

- Elastic scalability: Throughput scales on demand to match your infrastructure growth

- Platform engineering workflows: Tools designed for teams managing shared services and infrastructure

- Agentic-ready infrastructure layer: Auto-discovery and monitoring of AI agent-spawned services, with elastic architecture that scales to 10–100x telemetry growth without manual reconfiguration or cost explosion

The Apica advantage: Monitor modern platforms with observability that scales and adapts as fast as your infrastructure does, and as fast as your AI agents spawn new services on top of it.

Complete Cloud-Native Visibility

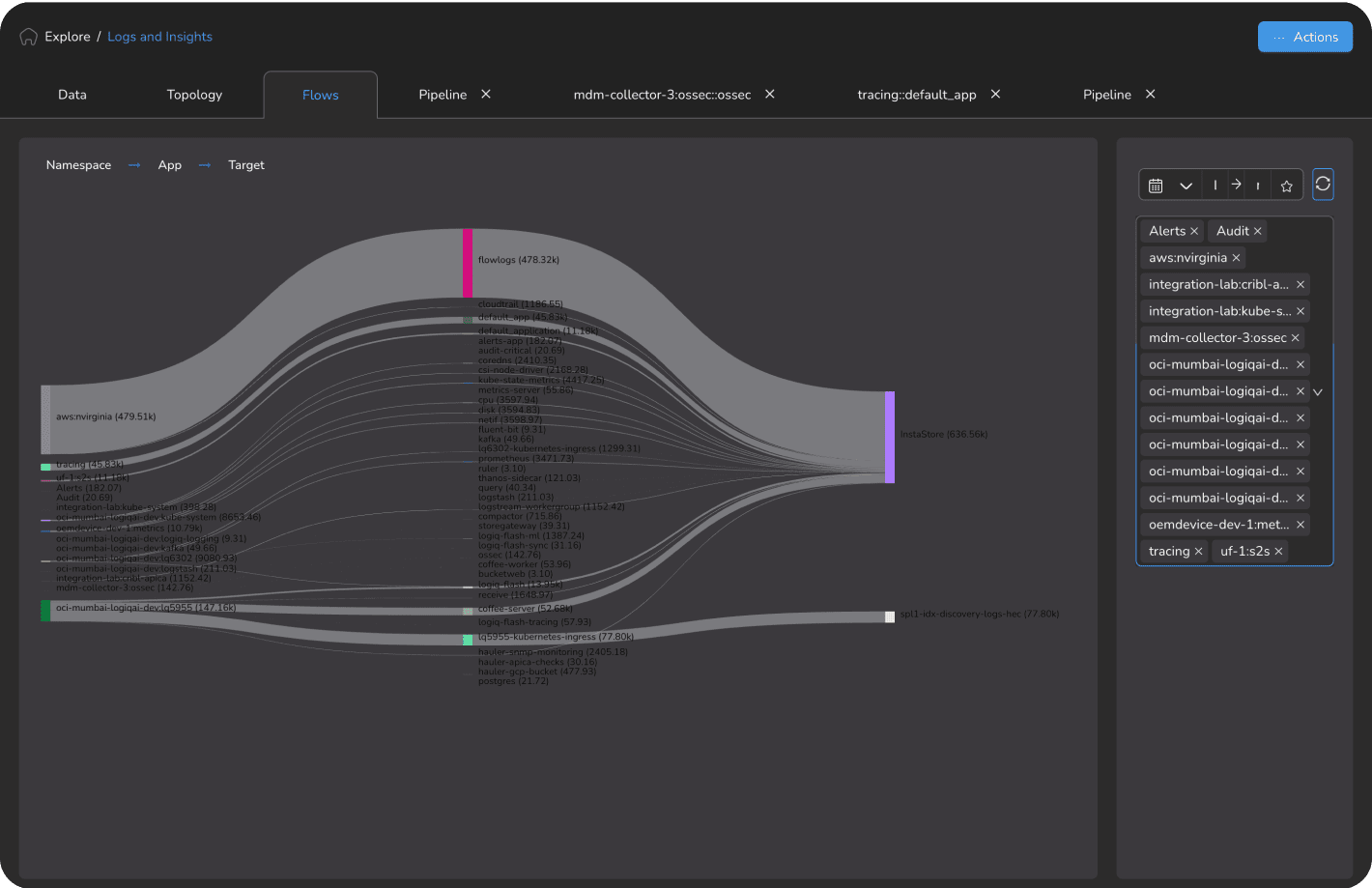

Apica delivers Kubernetes-native monitoring that adapts automatically to your dynamic infrastructure, from individual container metrics through multi-cloud topology mapping, and extends seamlessly to the AI agent workloads running on that infrastructure.

Kubernetes-Native Monitoring

- Automatic discovery of all pods, containers, deployments, namespaces, and nodes

- Dynamic monitoring adapts to ephemeral workloads without manual configuration

- Service dependency mapping across complex microservices architectures

- Full visibility into Kubernetes events, resource limits, and scheduling decisions
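Automatic discovery of ephemeral workloads typically rests on the Kubernetes watch API, which streams ADDED, MODIFIED, and DELETED events for objects such as pods. The sketch below is not Apica's implementation. It is a minimal illustration, assuming events arrive as plain dicts shaped like the JSON watch stream (`{"type": ..., "object": {...}}`), of how a set of monitoring targets can follow pods in and out of existence without manual configuration. The `pod_event` helper and the namespace and pod names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PodInventory:
    """Live monitoring targets keyed by (namespace, pod name), built from watch events."""

    targets: dict[tuple[str, str], dict[str, str]] = field(default_factory=dict)

    def apply(self, event: dict) -> None:
        kind = event["type"]
        if kind not in ("ADDED", "MODIFIED", "DELETED"):
            return  # this sketch ignores BOOKMARK and ERROR events
        meta = event["object"]["metadata"]
        key = (meta["namespace"], meta["name"])
        if kind == "DELETED":
            # The pod is gone: drop it so no stale target lingers.
            self.targets.pop(key, None)
        else:
            # New or updated pod: start (or keep) monitoring it with its current labels.
            self.targets[key] = meta.get("labels", {})


def pod_event(kind: str, namespace: str, name: str, **labels: str) -> dict:
    """Build a minimal watch-style event (hypothetical helper for this example)."""
    return {"type": kind, "object": {"metadata": {"namespace": namespace, "name": name, "labels": labels}}}


inventory = PodInventory()
for ev in [
    pod_event("ADDED", "shop", "checkout-1", app="checkout"),
    pod_event("ADDED", "shop", "cart-1", app="cart"),
    pod_event("DELETED", "shop", "checkout-1"),
]:
    inventory.apply(ev)

print(sorted(inventory.targets))  # [('shop', 'cart-1')]
```

Keying targets by namespace and name, and deleting on DELETED, is what keeps short-lived pods from leaving orphaned targets behind.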

High Cardinality Metrics at Scale

- Handle millions of unique metric streams without performance degradation

- No artificial cardinality limits that force you to sacrifice observability context

- Efficient storage and querying that stay fast as data volumes grow

- Powered by Apica Forge — purpose-built for high-cardinality time series at enterprise scale

Multi-Cloud Unified Visibility

- Single platform for AWS, Azure, GCP, and on-premises infrastructure

- Consistent metric, log, and trace collection across all cloud environments

- Cross-cloud topology visualization and dependency mapping

- Unified alerting regardless of where workloads run
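Consistent collection across clouds largely comes down to mapping each provider's way of describing location onto one shared schema, so that a single alert rule can be evaluated everywhere. The sketch below assumes hypothetical input records from four sources and normalizes them onto attribute names borrowed from the OpenTelemetry `cloud.provider` and `cloud.region` resource conventions. The input field names, metric name, and threshold are illustrative, and `"onprem"` is a made-up placeholder rather than an OpenTelemetry-defined value.

```python
def normalize(source: str, record: dict) -> dict:
    """Map provider-specific location fields onto shared resource attributes.

    Input field names per source are hypothetical; output keys follow the
    OpenTelemetry cloud.provider / cloud.region attribute names.
    """
    if source == "aws":
        provider, region = "aws", record["region"]
    elif source == "gcp":
        # GCP zones look like "europe-west1-b"; the region drops the zone suffix.
        provider, region = "gcp", record["zone"].rsplit("-", 1)[0]
    elif source == "azure":
        provider, region = "azure", record["location"]
    elif source == "onprem":
        # "onprem" is a placeholder, not an OpenTelemetry-defined provider value.
        provider, region = "onprem", record["datacenter"]
    else:
        raise ValueError(f"unknown source: {source}")
    return {
        "cloud.provider": provider,
        "cloud.region": region,
        "metric": record["metric"],
        "value": record["value"],
    }


def breaches(records: list[dict], metric: str, threshold: float) -> list[tuple[str, str]]:
    """One alert rule applied to every environment after normalization."""
    return [
        (r["cloud.provider"], r["cloud.region"])
        for r in records
        if r["metric"] == metric and r["value"] > threshold
    ]


raw = [
    ("aws", {"region": "us-east-1", "metric": "cpu_utilization", "value": 0.93}),
    ("gcp", {"zone": "europe-west1-b", "metric": "cpu_utilization", "value": 0.41}),
    ("azure", {"location": "westeurope", "metric": "cpu_utilization", "value": 0.88}),
    ("onprem", {"datacenter": "dc-frankfurt", "metric": "cpu_utilization", "value": 0.52}),
]
normalized = [normalize(src, rec) for src, rec in raw]
print(breaches(normalized, "cpu_utilization", 0.85))
# [('aws', 'us-east-1'), ('azure', 'westeurope')]
```

Normalizing once at collection time means the alert rule never needs per-cloud branches.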

Container Resource Optimization

- Understand actual resource utilization vs. requests and limits across all namespaces

- Identify over-provisioned workloads and reclaim wasted cloud spend

- Capacity planning based on real usage patterns, not conservative estimates

- Cost attribution by namespace, team, and application
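Once usage data exists, comparing observed usage against requests is simple arithmetic. The sketch below flags workloads whose 95th-percentile CPU usage sits below half of their request, suggests a new request with 20% headroom, and rolls reclaimable capacity up by namespace for cost attribution. The workloads, the p95 statistic, the 50% flag threshold, and the 20% headroom are all assumptions chosen for illustration. A real rightsizing decision would also weigh memory, burst behavior, and limits.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Workload:
    namespace: str
    name: str
    cpu_request_m: int  # CPU request in millicores
    cpu_p95_m: int      # observed 95th-percentile CPU usage in millicores


def rightsizing_report(workloads, flag_below_pct=50, headroom_pct=120):
    """Return (namespace, name, utilization %, suggested request, reclaimable m)
    for each workload whose p95 usage is under `flag_below_pct` of its request."""
    report = []
    for w in workloads:
        util_pct = w.cpu_p95_m * 100 // w.cpu_request_m
        if util_pct < flag_below_pct:
            # Suggested request = p95 usage plus headroom, rounded up with integer math.
            suggested = (w.cpu_p95_m * headroom_pct + 99) // 100
            report.append((w.namespace, w.name, util_pct, suggested, w.cpu_request_m - suggested))
    return report


def reclaimable_by_namespace(report):
    """Sum reclaimable millicores per namespace (simple cost attribution)."""
    totals = defaultdict(int)
    for namespace, _name, _util, _suggested, reclaim in report:
        totals[namespace] += reclaim
    return dict(totals)


fleet = [
    Workload("payments", "api", cpu_request_m=2000, cpu_p95_m=450),
    Workload("payments", "worker", cpu_request_m=1000, cpu_p95_m=800),
    Workload("search", "indexer", cpu_request_m=4000, cpu_p95_m=1000),
    Workload("search", "frontend", cpu_request_m=500, cpu_p95_m=100),
]
report = rightsizing_report(fleet)
print(report)
print(reclaimable_by_namespace(report))  # {'payments': 1460, 'search': 3180}
```

Basing the suggestion on observed percentiles rather than the original request is what the text means by capacity planning from real usage rather than conservative estimates.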

Agentic AI Infrastructure Readiness

Modern cloud-native infrastructure is the substrate on which AI agents run. Apica's platform-engineering-grade observability extends naturally to agentic workloads:

- Auto-discovery of AI agent-spawned microservices as they emerge dynamically in Kubernetes environments — no manual configuration required

- Elastic architecture handles the 10–100x telemetry volume growth that production AI agents generate without performance penalties or cost spikes

- Pipeline-first telemetry governance routes AI agent traces and logs to cost-optimized storage before expensive indexing, extending to AI workloads the same cost control that platform teams apply to traditional ones

- Forge's high-cardinality metrics engine handles the extreme dimensionality of AI agent workloads, including per-agent, per-model, and per-request metric streams, at enterprise scale
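Routing before indexing means each record is classified in the pipeline, and only the subset that needs fast search is sent to the expensive indexed tier. The sketch below is a hypothetical policy, not Apica Flow's configuration or API. It keeps error logs and slow spans hot and sends routine agent chatter to low-cost archive storage. The field names (`kind`, `severity`, `duration_ms`, `agent.id`), the 2,000 ms threshold, and the tier names are assumptions.

```python
SLOW_SPAN_MS = 2_000  # hypothetical latency threshold for keeping a span hot


def route(record: dict) -> str:
    """Pick a storage tier for one telemetry record before anything is indexed."""
    if record.get("severity") in {"ERROR", "FATAL"}:
        return "index"  # errors stay searchable for incident response
    if record.get("kind") == "span" and record.get("duration_ms", 0) > SLOW_SPAN_MS:
        return "index"  # slow spans are likely relevant to latency SLOs
    return "archive"    # everything else goes to cheaper storage


def route_batch(records: list[dict]) -> dict[str, list[dict]]:
    """Split a batch of records into per-tier lists, preserving order."""
    routed: dict[str, list[dict]] = {"index": [], "archive": []}
    for record in records:
        routed[route(record)].append(record)
    return routed


batch = [
    {"kind": "log", "severity": "INFO", "agent.id": "planner-1", "body": "tool call ok"},
    {"kind": "log", "severity": "ERROR", "agent.id": "planner-1", "body": "tool call failed"},
    {"kind": "span", "agent.id": "retriever-2", "duration_ms": 3_500},
    {"kind": "span", "agent.id": "retriever-2", "duration_ms": 120},
]
routed = route_batch(batch)
print({tier: len(items) for tier, items in routed.items()})  # {'index': 2, 'archive': 2}
```

Because the decision is made per record in the pipeline, the indexing bill tracks the volume of useful telemetry rather than the total volume agents emit.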

Platform Teams That Operate with Confidence

Results based on Apica customer deployments. Individual results may vary based on environment complexity and implementation scope.

Global Fintech: Platform Engineering Team

Challenge: Managing 500+ microservices across AWS and GCP, with traditional monitoring tools unable to track ephemeral Kubernetes pods. The resulting observability gaps drove average incident detection time to 45 minutes.

Solution: Apica Kubernetes-native monitoring with unified multi-cloud visibility and high-cardinality metrics.

- 85% reduction in incident detection time (45 min → 7 min)

- Unified dashboard across AWS and GCP eliminated tool-switching during incidents

- 30% reduction in cloud spend through accurate resource utilization reporting

- Platform team reduced monitoring operational overhead by 60%


E-Commerce Platform: Infrastructure Ops

Challenge: A Kubernetes deployment with 200+ ephemeral pods. A high-cardinality explosion caused Prometheus to fail under load, creating monitoring blackouts during peak traffic.

Solution: Apica's high-cardinality metrics platform, replacing Prometheus for Kubernetes-scale telemetry.

- 100% monitoring coverage maintained during peak traffic events — zero blackouts

- Handled 50M+ unique metric streams without performance degradation

- Resource optimization identified $180K annual cloud spend savings

- Alert noise reduced 70% through smart cardinality-aware aggregation

Built for Platform Engineering at Scale — Including the AI Workloads Running on Your Infrastructure

Unlike traditional monitoring tools retrofitted for Kubernetes, Apica was designed from the ground up for the realities of cloud-native platform operations. And because Apica's elastic, high-cardinality architecture was built for the most demanding container orchestration environments, it's already the infrastructure foundation that agentic AI workloads require.

Kubernetes-Native by Design

Not a traditional tool retrofitted for containers — purpose-built for dynamic, ephemeral workloads. Automatic discovery and monitoring without manual configuration as your infrastructure scales.

High Cardinality Without Compromise

Handle millions of unique metric streams from modern Kubernetes deployments. No artificial limits that force you to choose between observability completeness and cost.

True Multi-Cloud Visibility

Consistent observability across AWS, Azure, GCP, and on-premises infrastructure. One platform, one view, one alerting system — regardless of where workloads run.

Elastic Scalability

Monitoring that scales instantly to match your infrastructure — peak traffic events, deployment surges, and auto-scaling don't create observability gaps or cost spikes.

Agentic-Ready Infrastructure

The same Kubernetes-native, elastic, high-cardinality architecture that handles modern container orchestration is exactly what agentic AI infrastructure demands. AI agents spawn new microservices dynamically, generate 10–100x more telemetry than traditional workloads, and require the auto-discovery and cost-efficient pipeline governance that Apica was built to provide. Platform teams that standardize on Apica today won't need to rebuild their observability foundation when AI agents reach production scale.

Go Deeper

Related blog posts, product pages, and documentation for platform engineering teams.

Apica Flow

Control and route telemetry before ingestion with zero data loss

Explore Flow →

Apica Fleet

Centrally manage telemetry agents across hybrid environments

Explore Fleet →

Apica Forge

Real-time high-cardinality metrics with SLO insights

Explore Forge →

OpenTelemetry: The Future of Observability with Advanced Tracing and Metrics

Read article →

What is OpenTelemetry? A Comprehensive Guide

Read article →

High-Cardinality Metrics at Scale

Store billions of unique metric streams without performance penalties

Read use case →