Apica Forge

Where Your Metrics Become the Foundation for Agentic Intelligence

Store Billions of Metrics. Query Instantly. Never Compromise.

Flow governs the data. Lake stores the telemetry. Forge powers the metrics.

While Lake stores all observability data at object-storage economics, Forge is purpose-built for the one thing legacy platforms can’t handle: extreme-scale, high-cardinality metrics at machine speed. A distributed time-series database engineered for resiliency, simple operation, and embedded analytics, Forge stores billions of unique metric streams (token costs, agent latencies, SLI/SLO signals, multi-agent call rates) without performance degradation or cost explosion.

Because Forge is distributed and continuously replicating, your metrics remain available and queryable even during node failures or maintenance windows. No gaps in agent telemetry. No alerting blind spots. No capacity planning. Just the metrics backbone your agentic infrastructure depends on.

Are Limited Visibility, Data Volume, and Query Performance Hindering Your IT Monitoring?

Cardinality Pricing Traps

Query Performance Degradation

Exponential Cost Scaling

Premature Aggregation

Tag Anxiety

Vendor Lock-In

What Forge Does

A production-validated TSDB engineered for unlimited cardinality, millisecond query performance, and cloud-native scale.

High-Cardinality Metrics at Petabyte Scale

Continuous Operations Under Failure

Embedded Analytics and Computation

Open Ecosystem, Zero Lock-In

Purpose-Built for Modern Infrastructure Scale

Why Forge

From eliminating cardinality traps to delivering millisecond query performance, Forge is built for modern cloud-native monitoring.

Unlimited Cardinality Without Cost Explosion

Millisecond Query Performance at Scale

Enhanced Monitoring Visibility

Seamless Data Integration

High Availability by Design

Tag Freely

Cloud-Native Architecture

Open Integrations

Production-Validated at Enterprise Scale

Enterprise Cloud-Native Platform

A large-scale Kubernetes deployment was generating tens of millions of unique metric streams. The existing platform imposed cardinality limits and forced tag dimensionality compromises that degraded incident response.

Results:

- 10–15× increase in metric cardinality without performance impact

- Eliminated all tag dimensionality compromises — every label retained

- 60–70% reduction in metrics infrastructure costs

- Engineers no longer self-censor labels to stay within cardinality budgets

Multi-Cloud Enterprise (AWS/Azure/GCP)

A hybrid multi-cloud infrastructure with an Istio/Envoy service mesh was generating extreme tag cardinality. The Prometheus federation approach couldn’t scale past 30-day retention across 200+ instances.

Results:

- Centralized metrics from 200+ Prometheus instances across 3 cloud providers

- Extended data retention from 30 days to 2 years without storage cost explosion

- Enabled cross-cloud capacity planning and year-over-year trend analysis

- Sub-100ms query latency maintained across entire multi-cloud footprint

Frequently Asked Questions

How does Apica Forge handle high-cardinality workloads differently than traditional TSDBs?

What does "histogram-native storage" mean in practice?

Can Apica Forge replace our existing Prometheus setup?

Is Apica Forge available on-premises, in the cloud, or both?

What does Apica Forge's pricing model look like?

Does Apica Forge integrate with Grafana and our existing dashboards?

Works With Your Existing Stack

Graphite

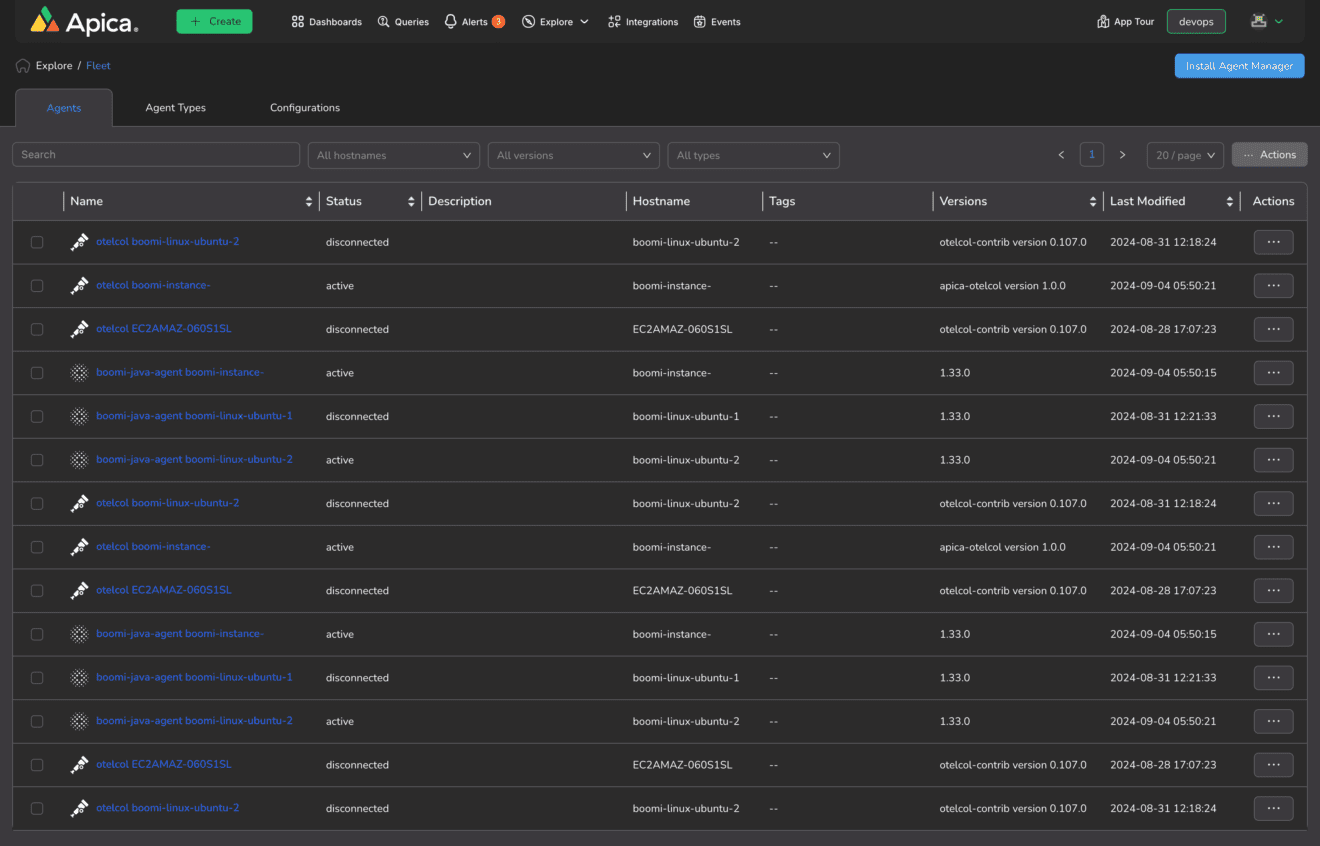

OpenTelemetry

Prometheus

Telegraf

Grafana

StatsD

collectd

Kubernetes

Don’t see your metrics tool? Forge integrates with any metrics collection tool that supports open standards — no proprietary formats required.